Difference between revisions of "Inter-Organizational Workflow"


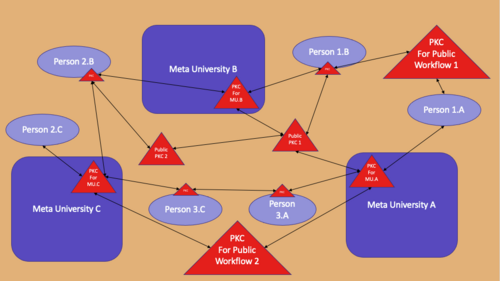


Revision as of 15:36, 19 February 2022

PKC Workflow is a data-transforming process (a data manipulation program) conducted by a number of authenticated and authorized PKCs (shown as the red triangles in the attached diagram). It integrates personal users and MU-compliant organizations using the common data formats and data transports made available through PKC. It takes inputs from one or more instances of PKC and passes data content on to the next PKC, which is why it is considered a workflow.

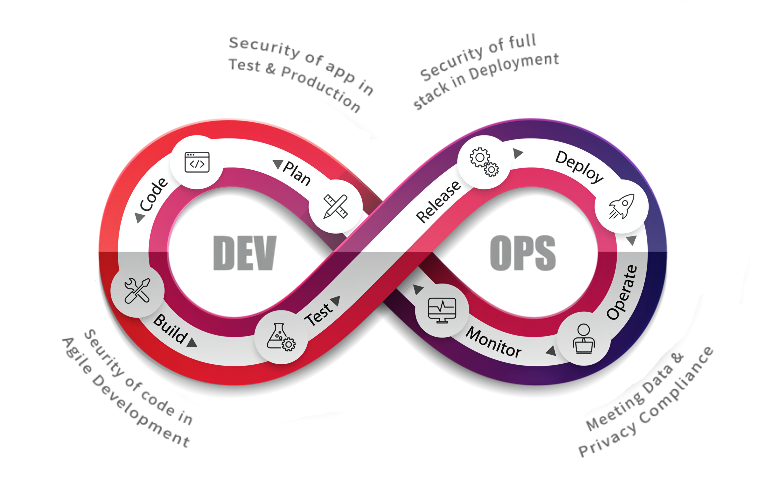

In other words, PKC packages a number of industry-strength data containerization technologies that act as the workflow engine, executing the relevant data exchange activities across the social boundaries of individuals and organizations. PKC is implemented as a new breed of data appliance that can, in principle, include any number of database, data security, and data transport functionalities. Standardizing on one unified data appliance makes it relatively easy to enable workflows across different social boundaries. At the time of this writing, a significant amount of business is already conducted using similar technologies, under the assumption that running automated data services requires dedicated professional team members. PKC departs from that assumption by publishing the operational procedures, as well as the source code required to provision these data services, as learnable activities. Competent individuals or organizations that can carry out this technical work can simply follow the published procedures to offer these services, building on the accumulated knowledge of the PKC user community. For this reason, PKC Workflow can also be thought of as Inter-organizational Workflow, MU Community Workflow, or PKC DevOps Cycle.

Because the workflow is inter-organizational, its essential functionality relates mostly to Federated Identity Management: authentication and authorization protocols such as OAuth are necessary for it to work online.
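As a minimal sketch of what such a protocol involves, the following builds the first request of an OAuth 2.0 authorization code flow. The host name, realm (`pkc`), client ID (`pkc-local`), and redirect URI are hypothetical placeholders, and the endpoint path assumes the layout used by recent Keycloak releases (older releases add an `/auth` prefix); none of these values come from the PKC configuration itself.

```python
import secrets
from urllib.parse import urlencode, urlparse, parse_qs


def build_authorization_url(base_url, realm, client_id, redirect_uri, scope="openid"):
    """Return (url, state) for an OAuth 2.0 authorization code request.

    The path below is an assumption based on recent Keycloak releases.
    """
    # Random value the client checks on the callback to detect forged responses.
    state = secrets.token_urlsafe(16)
    query = urlencode({
        "response_type": "code",   # ask for an authorization code
        "client_id": client_id,
        "redirect_uri": redirect_uri,
        "scope": scope,
        "state": state,
    })
    endpoint = f"{base_url.rstrip('/')}/realms/{realm}/protocol/openid-connect/auth"
    return f"{endpoint}?{query}", state


# Hypothetical values, for illustration only.
url, state = build_authorization_url(
    "https://keycloak.example.org/",
    "pkc",
    "pkc-local",
    "http://localhost:8080/callback",
)
print(url)
```

The user's browser is sent to this URL; after login, the identity provider redirects back with a code that the client exchanges for tokens in a separate, server-side request.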

Introduction

The purpose of PKC Workflow, or the PKC DevOps Cycle, is to leverage well-known data processing tools to manage source code and binary files in a unifying abstraction framework, one that treats data as three types of universal abstraction:

- File from the Past: All data collected in the past can be bundled in Files.

- Page of the Present: All data to be interactively presented to data consuming agents are shown as Pages.

- Service of the Future: All data to be obtained by other data manipulation processes are known as Services.

By classifying and managing data assets as files, pages, and services under version control, we can incrementally sift through data in three namespaces that intuitively label the nature of data according to time progression, which reflects the partially ordered nature of PKC Workflow. This approach of iteratively cleansing data is also known as a DevOps cycle.
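The partial ordering mentioned above can be illustrated with a small dependency graph. This is only a sketch: the instance names are hypothetical, and the use of Python's standard `graphlib` module (Python 3.9+) is an illustrative choice, not part of PKC.

```python
from graphlib import TopologicalSorter

# Hypothetical workflow: each key is a PKC instance, and each value is the
# set of PKC instances whose output it consumes.
dependencies = {
    "pkc-personal": set(),
    "pkc-org-a": {"pkc-personal"},
    "pkc-org-b": {"pkc-personal"},
    "pkc-archive": {"pkc-org-a", "pkc-org-b"},
}


def execution_order(deps):
    """Return one valid linear order that respects every dependency."""
    return list(TopologicalSorter(deps).static_order())


order = execution_order(dependencies)
print(order)
```

Here `pkc-org-a` and `pkc-org-b` are not ordered relative to each other, so either may run first; only the constraints that actually exist between steps are enforced, which is what makes the order partial rather than total.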

Given the above-mentioned assumptions, data assets can be organized according to these three types in the following way:

- Files can be managed using content-addressed networks; their content cannot be changed, since they can be stored in IPFS format.

- Pages are composed of data content, style templates, and UI/UX code. They require test and verification procedures to ensure healthy operation. The final presentation of data content often needs to be dynamically adjusted to the user's device and its operating context. Therefore, pages are considered to be deployed data assets.

- Services are programs that have well-known names and should be provisioned on computing devices with service quality assessment. They are usually associated with Docker-like container technologies and have published names registered in places like Docker Hub. As service provisioning technologies mature, tools such as Kubernetes will manage services with real-time service quality updates, so that the behavior of data services can be tracked and diagnosed with industry-strength protocols.
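To see why content-addressed Files cannot be changed, consider the simplified sketch below. It is not the IPFS format (IPFS uses multihash-based content identifiers and chunked storage); a plain SHA-256 hex digest is assumed here purely to show the principle, and `ContentStore` is a hypothetical name.

```python
import hashlib


class ContentStore:
    """In-memory store that keys each blob by the SHA-256 digest of its bytes."""

    def __init__(self):
        self._blobs = {}

    def put(self, data: bytes) -> str:
        # The address is derived from the content, so identical content
        # always maps to the same address.
        address = hashlib.sha256(data).hexdigest()
        self._blobs[address] = data
        return address

    def get(self, address: str) -> bytes:
        data = self._blobs[address]
        # Verify on read: altered content would no longer match its address.
        if hashlib.sha256(data).hexdigest() != address:
            raise ValueError("content does not match its address")
        return data


store = ContentStore()
original = store.put(b"meeting notes, 2022-02-19")
revised = store.put(b"meeting notes, 2022-02-19 (edited)")
print(original != revised)
```

Editing a file therefore never overwrites it: the edited bytes receive a new address, and the old address keeps pointing at the old content.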

PKC Workflow in a time-based naming convention

Given the complexity of possible data deployment scenarios, we will show that all of this complexity can be compressed into one unifying process abstraction. This abstraction is approximated using PKC as a container for recipes that cope with different use cases by treating all phenomena as time-stamped data content. PKC then provides an extensible dictionary to continuously define the growing vocabulary and, at the same time, provides computable representations of solutions, provided that the computing results are deployed using computing services that know how many users and how much data processing capacity they can mobilize to meet demand. In other words, under this three-layered (File, Page, Service) classification system, PKC Workflow provides a fixed grounding metaphor (Past, Present, Future) for dealing with all data.
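One way to picture this classification is as a small data model in which every asset carries a namespace and a timestamp. The class and field names below are hypothetical, chosen only to mirror the (File, Page, Service) and (Past, Present, Future) pairing described above.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from enum import Enum


class Namespace(Enum):
    """The three abstractions, each paired with its time metaphor."""
    FILE = "Past"
    PAGE = "Present"
    SERVICE = "Future"


@dataclass(frozen=True)
class DataAsset:
    name: str
    namespace: Namespace
    timestamp: datetime

    def title(self) -> str:
        # Prefix the name with its namespace, wiki-style, e.g. "File:report.pdf".
        return f"{self.namespace.name.capitalize()}:{self.name}"


asset = DataAsset(
    name="report.pdf",
    namespace=Namespace.FILE,
    timestamp=datetime(2022, 2, 19, tzinfo=timezone.utc),
)
print(asset.title(), "->", asset.namespace.value)
```

Keeping the namespace set fixed at three values while leaving names open-ended reflects the idea of a fixed grounding metaphor combined with an extensible vocabulary.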

PKC DevOps Strategy

There will be three public domains to test the workflow:

- pkc-dev.org for developmental tests

- pkc-ops.org for candidate service deployment

- pkc-back.org for production data backup demonstration

Task 1

Upgrade the current container from MediaWiki 1.35 to the latest stable version [1.37.1] (Ref), check compatibility with the related extensions, and create a pre-built container image on the local machine.

- Update the Matomo Spatial Feature

- Install the YouTube extension

Task 2

Deploy the container to pkc-mirror.de, make sure it works with the Ansible script, and download the pre-built image onto the cloud machine. Ensure it works with Matomo and Keycloak.

Task 3

Import content from pkc.pub, and try to convert EmbedVideo usage to the YouTube extension, or find a newer extension that supports video embedding.

Task 4

Connect a local PKC installation to the pkc-mirror.de Keycloak instance using a federated account, and test the functionality.

Task 5

Think of using blockchain infrastructures, especially inter-chain/parachain infrastructures, such as Polkadot{.js} to implement inter-organizational governance.

Please see the details of the process on this page. For the known issues log, please refer to Devops Known Issues.

Notes

- When writing a logic model, one should be aware of the difference between concept and instance.

- A logic model is composed of many submodels. When not intending to specify their abstract part, one can use only the Function Model.

- What is the relationship between the model submodules, and the relationships among all the subfunctions?

- Note: Sometimes the distinction between input and process is ambiguous. For example, the Service namespace is required to achieve the goal, but it might be either an input or a product created along the process. In general, both the input and the process contain uncertainty and require a decision.

- The parameter of Logic Model is minimized to its name, which is the most important part of it. The name should be summarized from its value.

- Note that the name Jenkins refers to the resource itself, whereas the name Jenkins Implementation On PKC carries more context information and is therefore more suitable.

- I was intending to name the PKC Workflow's submodel "PKC Workflow/Jenkins Integration". However, a more proper name might be Task/Jenkins Integration, whose output is then passed to PKC Workflow/Automation. The organization of the PKC Workflow should be the project, and the Workflow should be the desired output of the project. The Task category is for moving toward that state. So the task could be the process of a Project, and the output of the task could serve as the process of the workflow.

- Each goal is associated with a static plan and dynamic process.

- To specify input and output from a logic model, we could get the input/output on every subprocess in the process (by transclusion)

- I renamed some models:

  - TLA+ Workflow -> System Verification

  - Docker Workflow -> Docker registry

- Question: How should we name? Naming is a kind of summarization that loses information.